The AI stack is evolving faster than most teams can keep up with.

A year ago, the biggest challenge was choosing between GPT-4 and Claude. Today, enterprise AI teams are running five, ten, sometimes fifteen different models across production workflows, and discovering that managing them is a full-time job on its own.

That’s where the AI control plane comes in.

This guide covers what an AI control plane is, why traditional MLOps infrastructure isn’t enough, what to look for in a modern solution, and how Neptune’s Meta-AI Router serves as the AI control plane your team needs right now.

What Is an AI Control Plane?

An AI control plane is the orchestration layer that sits above your AI models and manages how tasks are routed, executed, monitored, and communicated across your entire AI infrastructure.

Think of it like this: if your AI models are engines, the AI control plane is the cockpit. It doesn’t do the flying; it decides which engine to use, monitors performance, handles failures, and reports outcomes.

A true AI control plane handles four core functions:

- Model routing: Directing tasks to the most appropriate AI model based on cost, capability, speed, and context

- Workflow execution: Running multi-step AI workflows with branching logic and error recovery

- Observability: Tracking performance, cost, latency, and outcomes across every model call

- Communication: Delivering results to the right person or system at the right time

Why the Old Approach No Longer Works

For years, teams used experiment trackers like Neptune.ai, MLflow, and Weights & Biases to manage their AI infrastructure. These tools were excellent at logging runs, comparing metrics, and storing model artifacts.

But they were built for a different era, the era of training one model at a time.

Today’s AI applications don’t train one model. They run many models simultaneously, each handling different tasks, in real time. The infrastructure challenge has fundamentally shifted:

| Capability | Old Experiment Trackers | AI Control Plane |
| --- | --- | --- |
| Purpose | Log training runs | Orchestrate production AI |
| Model support | One model at a time | Multi-model simultaneously |
| Routing logic | None | Dynamic, context-aware |
| Workflow execution | No | Yes, with branching |
| Real-time monitoring | Post-hoc analysis | Live observability |
| Output delivery | Dashboard only | Email, SMS, Slack, WhatsApp |
| Production-ready | Limited | Core design principle |

The 5 Core Components of an AI Control Plane

1. Intelligent Model Router

The router is the brain of the control plane. It receives every incoming task and decides which model, or combination of models, should handle it.

A well-designed router considers multiple factors simultaneously:

- Task type: Is this code generation, summarization, reasoning, or something else?

- Cost constraints: Should GPT-4 Turbo be used, or will Claude Haiku handle it just as well?

- Latency requirements: Does this task need a sub-second response or is it batch-processable?

- Model availability: Is the primary model rate-limited or experiencing downtime?

- Historical performance: Which model has performed best on similar tasks?

Neptune’s Meta-AI Router handles all five dimensions in real time, routing across GPT-4, Claude, Gemini, Llama, and custom models without any manual configuration.
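To make the five routing factors concrete, here is a minimal sketch of a rule-based router. It is an illustration only, not Neptune’s implementation: the `ModelProfile` fields, the `choose_model` function, the model names, and every number are assumptions invented for this example.

```python
from dataclasses import dataclass


@dataclass
class ModelProfile:
    # All fields are hypothetical; real routers track richer signals.
    name: str
    task_types: set[str]             # tasks the model is considered suitable for
    cost_per_1k_tokens: float        # illustrative figure, not real pricing
    typical_latency_ms: int
    available: bool = True           # False when rate-limited or down
    past_success_rate: float = 0.5   # share of similar past tasks judged successful


def choose_model(models, task_type, max_cost_per_1k, max_latency_ms):
    """Return the best eligible model, or None if nothing meets the constraints."""
    eligible = [
        m for m in models
        if m.available
        and task_type in m.task_types
        and m.cost_per_1k_tokens <= max_cost_per_1k
        and m.typical_latency_ms <= max_latency_ms
    ]
    if not eligible:
        return None
    # Prefer the best historical performance; break ties with the lower cost.
    return max(eligible, key=lambda m: (m.past_success_rate, -m.cost_per_1k_tokens))


models = [
    ModelProfile("large-model", {"reasoning", "code"}, 10.0, 2000, past_success_rate=0.95),
    ModelProfile("small-model", {"summarization", "code"}, 0.5, 400, past_success_rate=0.85),
]
choice = choose_model(models, "code", max_cost_per_1k=1.0, max_latency_ms=1000)
print(choice.name)  # small-model: the large model exceeds the cost ceiling
```

Hard constraints (availability, task fit, cost, latency) filter the candidates first, and historical performance ranks whatever remains. A production router would likely weigh these signals rather than apply strict cutoffs.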

2. Workflow Execution Engine

Most real AI tasks aren’t single-step. They’re sequences: extract data, classify it, summarize it, validate the summary, format the output, and deliver it.

A workflow execution engine chains these steps together with conditional logic, parallel processing, and automatic retry on failure.

This is fundamentally different from simply calling an API. The execution engine manages state across steps, handles partial failures gracefully, and ensures every workflow completes, even when individual model calls fail.
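The difference from a bare API call is easier to see in code. The sketch below chains steps, tracks state, and retries a failing step. The `run_workflow` function and the step names are hypothetical, and unlike a full engine it simply re-raises once retries are exhausted rather than falling back to another model.

```python
import time


def run_workflow(steps, payload, max_attempts=3, backoff_s=0.0):
    """Run steps in order, retrying a failed step; return (result, state)."""
    state = {"completed": [], "attempts": {}}
    for name, step in steps:
        for attempt in range(1, max_attempts + 1):
            state["attempts"][name] = attempt
            try:
                payload = step(payload)
                state["completed"].append(name)
                break
            except Exception:
                if attempt == max_attempts:
                    raise  # surface the failure once retries are exhausted
                time.sleep(backoff_s * attempt)  # simple linear backoff
    return payload, state


calls = {"n": 0}


def flaky_summarize(text):
    # Stand-in for a model call that fails once, then succeeds.
    calls["n"] += 1
    if calls["n"] == 1:
        raise RuntimeError("simulated transient model error")
    return text[:20]


steps = [("extract", str.strip), ("summarize", flaky_summarize), ("format", str.upper)]
result, state = run_workflow(steps, "  quarterly revenue grew in all regions  ")
print(result)             # QUARTERLY REVENUE GR
print(state["attempts"])  # {'extract': 1, 'summarize': 2, 'format': 1}
```

The `state` dictionary is what lets an engine resume or report on a partially completed workflow. Conditional branching and parallel steps are left out to keep the example short.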

3. Unified Observability Layer

Without a control plane, AI observability is fragmented. You might have one dashboard in OpenAI’s platform, another in Anthropic’s console, logs in Datadog, and cost data in your cloud billing, all disconnected.

The observability component of an AI control plane unifies all of this:

- Total cost per workflow, not just per model call

- End-to-end latency across chained model calls

- Quality scores and output validation results

- Failure rates and automatic fallback triggers

- Token usage optimization recommendations
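As a rough illustration of the first two items above, per-call records can be rolled up into one summary per workflow run. The record fields (`run_id`, `cost_usd`, `latency_ms`, `ok`) and the sample values are assumptions for this sketch, not a real log schema.

```python
from collections import defaultdict

# Hypothetical per-call log records; field names and values are illustrative.
call_log = [
    {"run_id": "run-1", "workflow": "support-triage", "cost_usd": 0.010, "latency_ms": 300, "ok": True},
    {"run_id": "run-1", "workflow": "support-triage", "cost_usd": 0.002, "latency_ms": 150, "ok": True},
    {"run_id": "run-2", "workflow": "report-draft", "cost_usd": 0.040, "latency_ms": 900, "ok": False},
    {"run_id": "run-2", "workflow": "report-draft", "cost_usd": 0.015, "latency_ms": 500, "ok": True},
]


def summarize_runs(records):
    """Aggregate individual model calls into one summary per workflow run."""
    runs = defaultdict(
        lambda: {"workflow": None, "cost_usd": 0.0, "latency_ms": 0, "calls": 0, "failures": 0}
    )
    for rec in records:
        run = runs[rec["run_id"]]
        run["workflow"] = rec["workflow"]
        run["cost_usd"] += rec["cost_usd"]
        run["latency_ms"] += rec["latency_ms"]  # assumes the calls ran sequentially
        run["calls"] += 1
        run["failures"] += 0 if rec["ok"] else 1
    return dict(runs)


summary = summarize_runs(call_log)
for run_id, run in summary.items():
    print(run_id, run["workflow"], round(run["cost_usd"], 4), run["latency_ms"], run["failures"])
```

Summing latency only gives end-to-end time when calls are chained one after another. Parallel steps would need start and end timestamps instead.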

4. Policy and Governance Engine

As AI usage scales, governance becomes critical. Which teams are allowed to use which models? What’s the maximum cost per request? What data should never be sent to external models?

The governance layer enforces these rules automatically, without requiring engineering intervention on every request.
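The three questions above map naturally onto a pre-flight check that runs before any request leaves your network. The sketch below is one possible shape for such a check. The `Policy` fields, team names, model names, and data labels are all hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Policy:
    # Every field here is an assumption made for illustration.
    allowed_models_by_team: dict    # team name -> set of permitted model names
    max_cost_usd_per_request: float
    restricted_labels: set          # data labels that must stay on internal models
    internal_models: set            # models hosted on-premise or in a private cloud


def check_request(policy, team, model, estimated_cost_usd, data_labels):
    """Return a list of violations; an empty list means the request may proceed."""
    violations = []
    if model not in policy.allowed_models_by_team.get(team, set()):
        violations.append(f"team '{team}' may not use model '{model}'")
    if estimated_cost_usd > policy.max_cost_usd_per_request:
        violations.append("estimated cost exceeds the per-request limit")
    if set(data_labels) & policy.restricted_labels and model not in policy.internal_models:
        violations.append("restricted data may not be sent to an external model")
    return violations


policy = Policy(
    allowed_models_by_team={"support": {"external-model", "private-model"}},
    max_cost_usd_per_request=0.05,
    restricted_labels={"pii"},
    internal_models={"private-model"},
)
print(check_request(policy, "support", "external-model", 0.01, ["pii"]))
print(check_request(policy, "support", "private-model", 0.01, ["pii"]))  # []
```

Returning every violation, rather than stopping at the first, makes the result more useful for audit logs.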

5. Output Communication System

AI workflows don’t end when the model responds. They end when the right person receives the right information at the right time.

A modern AI control plane includes built-in communication agents that can deliver results via email, SMS, WhatsApp, Slack, webhooks, or direct API responses, based on the context of each request.

This closes the loop that most AI infrastructure leaves open.
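A minimal sketch of channel-aware delivery might look like the following. The formatting rules (for example, the 160-character SMS limit), the recipient fields, and the stub senders are assumptions for illustration. A real system would call each provider’s API in place of the stubs.

```python
def format_for_channel(channel, title, body):
    """Shape one workflow result for a channel (rules here are illustrative)."""
    if channel == "sms":
        text = f"{title}: {body}"
        return text if len(text) <= 160 else text[:157] + "..."
    if channel == "slack":
        return f"*{title}*\n{body}"  # asterisks render as bold in Slack messages
    if channel == "email":
        return {"subject": title, "body": body}
    raise ValueError(f"unsupported channel: {channel}")


def deliver(result, recipients, senders):
    """Send one result to each recipient through that recipient's channel."""
    delivered = []
    for recipient in recipients:
        channel = recipient["channel"]
        message = format_for_channel(channel, result["title"], result["body"])
        senders[channel](recipient["address"], message)
        delivered.append((recipient["address"], channel))
    return delivered


# Stub senders that record messages instead of calling a real provider.
outbox = []
senders = {
    ch: (lambda address, message, ch=ch: outbox.append((ch, address, message)))
    for ch in ("sms", "slack", "email")
}
result = {"title": "Weekly summary", "body": "Ticket volume fell 12% week over week. " * 6}
recipients = [
    {"address": "+15550100", "channel": "sms"},
    {"address": "#ops", "channel": "slack"},
]
print(deliver(result, recipients, senders))
```

Keeping formatting separate from sending means a new channel only needs one new formatter and one new sender.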

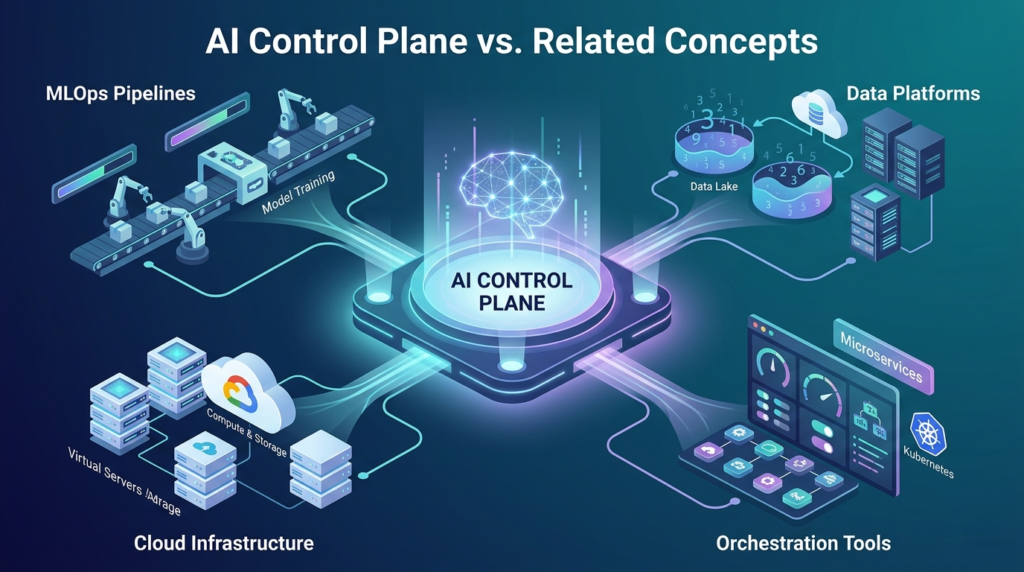

AI Control Plane vs. Related Concepts

There’s understandable confusion between AI control planes and similar concepts. Here’s how they differ:

| Concept | Primary Focus | Scope | Production-Ready? |
| --- | --- | --- | --- |
| AI Control Plane | Orchestrate & route across models | End-to-end AI operations | Yes |
| MLOps Platform | Train & deploy models | Model lifecycle | Partially |
| LLM Gateway | API routing & rate limiting | Request-level only | Yes (limited) |
| Experiment Tracker | Log training runs | Development phase only | No |
| AI Agent Framework | Build autonomous agents | Application layer | Varies |

Who Needs an AI Control Plane?

Not every organization needs a full AI control plane today. Here’s a practical guide:

You probably need one if:

- You’re running more than two AI models in production

- Your AI costs are unpredictable or hard to attribute to specific workflows

- Engineers are spending time manually routing requests between models

- AI failures are causing downstream process failures with no automatic recovery

- Business stakeholders need AI outputs, but aren’t getting them reliably

You might not need one yet if:

- You’re still in early experimentation with a single model

- Your AI use case is a single, simple API call

- Your team has fewer than three engineers and minimal AI workloads

Neptune as Your AI Control Plane

Neptune was built specifically to serve as the AI control plane for modern enterprise teams.

Unlike tools that added control plane features as an afterthought, Neptune was designed from day one around three pillars:

Pillar 1: Route

Neptune’s Meta-AI Router acts as the intelligent dispatcher for your entire AI infrastructure. Every incoming task is analyzed and routed to the optimal model, whether that’s GPT-4o, Claude 3.5, Gemini 1.5, Llama 3, or a custom fine-tuned model.

Routing decisions are made based on task type, cost targets, latency requirements, and live model performance data. When a model underperforms or goes offline, routing adjusts automatically, with no engineer intervention required.

Pillar 2: Execute

Neptune’s workflow engine handles complex, multi-step AI operations end-to-end. You define the workflow once; Neptune executes it reliably across thousands of requests.

This includes sequential and parallel execution, conditional branching, automatic retries, and state management across long-running workflows. Whether you’re automating a simple summarization pipeline or a complex research-to-report workflow, Neptune executes it without custom infrastructure.

Pillar 3: Communicate

The final mile of every AI workflow is communication. Neptune’s communication agents take workflow outputs and deliver them to the right destination: email, SMS, WhatsApp, Slack, or any webhook endpoint.

This isn’t a generic notification system. Neptune’s communication layer is context-aware, formatting outputs appropriately for each channel and recipient type.

Implementation: Getting Started with an AI Control Plane

Most teams follow a three-phase approach when implementing an AI control plane:

Phase 1: Audit Your Current AI Stack (Week 1)

- List every AI model currently in use across your organization

- Map which workflows depend on each model

- Identify current failure points and manual routing logic

- Calculate current AI infrastructure costs by model and workflow

Phase 2: Connect and Configure (Weeks 2-3)

- Connect your existing models to the control plane via API

- Define routing policies for each workflow type

- Set up observability dashboards and alerting

- Configure governance rules for cost, data privacy, and model access

Phase 3: Automate and Optimize (Weeks 4+)

- Enable automatic routing optimization based on performance data

- Add communication agents for key workflow outputs

- Expand workflow automation to additional use cases

- Review cost and performance reports to identify optimization opportunities

With Neptune, most teams complete Phases 1 and 2 within two weeks and see measurable cost reductions within the first month.

AI Control Plane Best Practices

Based on what high-performing AI teams do consistently:

- Start with your highest-volume workflows: the ROI on routing optimization is largest there

- Define cost targets per workflow before configuring routing: this prevents runaway spend as usage scales

- Monitor model quality, not just cost: the cheapest route isn’t always the right one

- Build communication into workflows from day one: retrofitting it later is harder than starting with it

- Review routing decisions weekly in early months: the data often reveals surprising optimization opportunities

Frequently Asked Questions

What’s the difference between an AI control plane and an API gateway?

An API gateway handles request routing, rate limiting, and authentication at the infrastructure level. An AI control plane goes much further; it understands the semantic content of AI tasks, routes based on capability and performance, manages multi-step workflows, and handles output delivery. An API gateway is plumbing; an AI control plane is intelligence.

Can I use an AI control plane with models I’ve fine-tuned myself?

Yes. Neptune supports custom model endpoints alongside managed APIs like OpenAI and Anthropic. Your fine-tuned models participate in routing decisions just like any other model.

How does an AI control plane handle model failures?

Neptune’s routing layer includes automatic failover. If a primary model is unavailable or returns an error, the router instantly redirects to the next-best option based on your configured fallback hierarchy. Most failures are invisible to end users.
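Conceptually, a fallback hierarchy is an ordered list of models tried in turn. The sketch below shows the general pattern only and does not reflect Neptune’s internals. The `call_with_fallback` function and the model names are hypothetical, and the two model functions are stand-ins for real API calls.

```python
def call_with_fallback(prompt, hierarchy):
    """Try each (name, callable) in order; return (model_used, response)."""
    errors = []
    for name, call in hierarchy:
        try:
            return name, call(prompt)
        except Exception as exc:
            errors.append(f"{name}: {exc}")  # remember why this model was skipped
    raise RuntimeError("all models failed: " + "; ".join(errors))


def primary(prompt):
    # Stand-in for a model that is currently unavailable.
    raise TimeoutError("simulated outage")


def backup(prompt):
    return f"summary of: {prompt}"


used, answer = call_with_fallback(
    "Q3 report", [("primary-model", primary), ("backup-model", backup)]
)
print(used, "->", answer)  # backup-model -> summary of: Q3 report
```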

Does using an AI control plane increase latency?

Routing decisions in Neptune add approximately 20-50ms to each request, usually negligible for enterprise workflows. For latency-critical applications, Neptune’s caching layer can reduce effective latency by serving cached responses for repeated or near-identical queries.
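The caching idea can be sketched in a few lines. This example is an assumption-laden illustration, not a description of Neptune’s caching layer. It treats prompts as "near-identical" only when they differ in letter case or whitespace, and the `ResponseCache` class is hypothetical.

```python
import hashlib


class ResponseCache:
    """In-memory cache keyed on the model name and a normalized prompt."""

    def __init__(self):
        self._store = {}

    @staticmethod
    def _key(model, prompt):
        # Collapse whitespace and case so trivially different prompts share a key.
        normalized = " ".join(prompt.lower().split())
        return hashlib.sha256(f"{model}\n{normalized}".encode("utf-8")).hexdigest()

    def get_or_call(self, model, prompt, call):
        key = self._key(model, prompt)
        if key not in self._store:
            self._store[key] = call(prompt)  # only reached on a cache miss
        return self._store[key]


model_calls = []


def fake_model(prompt):
    # Stand-in for a slow, billable model call.
    model_calls.append(prompt)
    return f"answer #{len(model_calls)}"


cache = ResponseCache()
first = cache.get_or_call("model-a", "What is our refund policy?", fake_model)
second = cache.get_or_call("model-a", "  what is our   REFUND policy? ", fake_model)
print(first == second, len(model_calls))  # True 1
```

Matching semantically similar prompts would require embeddings or another similarity measure, and any real cache also needs an expiry policy so stale answers are not served indefinitely.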

Is an AI control plane secure enough for sensitive enterprise data?

Neptune’s governance engine lets you define data classification policies that prevent sensitive data from being routed to specific models. You can enforce that certain task types always use your on-premise or private cloud models, regardless of routing optimization.

How does Neptune compare to building a custom AI control plane?

Building an equivalent system from scratch typically takes 3-6 months of engineering time and requires ongoing maintenance as models and APIs evolve. Neptune provides the same capabilities out of the box, with continuous updates as the AI model landscape changes.

The Bottom Line

The AI control plane is rapidly becoming as fundamental as the database layer or the API gateway: infrastructure that every serious AI-powered organization needs, whether or not they’ve named it that.

Teams that implement this layer early gain compounding advantages: lower costs, better reliability, faster iteration, and the ability to adopt new models without rebuilding their infrastructure.

Neptune was built to be exactly this layer: not an experiment tracker or a monitoring tool, but a true AI control plane that routes, executes, and communicates at production scale.